One solution to this problem is to introduce a multiple-writer protocol that maintains a twin for each writer and updates the original copy of the memory object by comparing the modified copy with its twin. Each node that contains a writer device performs the comparison and sends the result (e.g., diffs) to the host that maintains the original memory object. The host updates the original memory object with the diffs. However, this introduces significant communication and computation overhead in the cluster environment if the degree of sharing is high. Instead, the SnuCL runtime solves this problem by executing kernel-execution and memory commands atomically, in addition to keeping the most up-to-date copies using the device list. When the command scheduler issues a memory command or kernel-execution command, say C, it records the memory objects that are written by the command C in a list called the written-memory-object list.
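The twin-and-diff idea in this passage can be sketched on the host side as follows. This is a minimal illustration under stated assumptions, not SnuCL code: memory objects are modeled as `std::vector<int>`, and the names `Diff`, `make_diff`, `apply_diff`, and `merge_two_writers` are hypothetical.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical diff format: (element index, new value) pairs.
using Diff = std::vector<std::pair<std::size_t, int>>;

// Writer side: compare the modified copy with its twin (the snapshot taken
// before the writer started) and keep only the elements that changed.
Diff make_diff(const std::vector<int>& twin, const std::vector<int>& modified) {
    Diff diff;
    for (std::size_t i = 0; i < twin.size() && i < modified.size(); ++i) {
        if (twin[i] != modified[i]) {
            diff.emplace_back(i, modified[i]);
        }
    }
    return diff;
}

// Host side: update the original memory object with one writer's diff.
void apply_diff(std::vector<int>& original, const Diff& diff) {
    for (const auto& entry : diff) {
        if (entry.first < original.size()) {
            original[entry.first] = entry.second;
        }
    }
}

// Two writers update different locations of the same object; each keeps its
// own twin, and the host merges both diffs into the original.
std::vector<int> merge_two_writers() {
    std::vector<int> original = {0, 0, 0, 0};
    std::vector<int> twin_a = original, copy_a = original;
    std::vector<int> twin_b = original, copy_b = original;
    copy_a[0] = 7;  // writer A touches element 0
    copy_b[3] = 9;  // writer B touches element 3
    apply_diff(original, make_diff(twin_a, copy_a));
    apply_diff(original, make_diff(twin_b, copy_b));
    return original;  // both updates survive: {7, 0, 0, 9}
}
```

Even in this toy version, every writer has to scan its whole copy and ship a diff to the host, which hints at the communication and computation overhead the text mentions when the degree of sharing is high.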

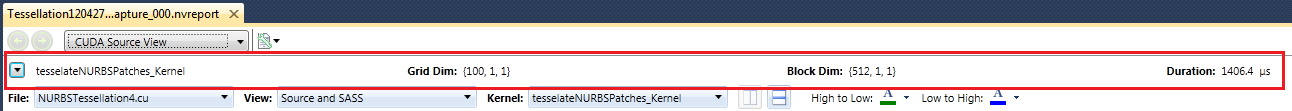

CUDALAUNCH EXAMPLE UPDATE

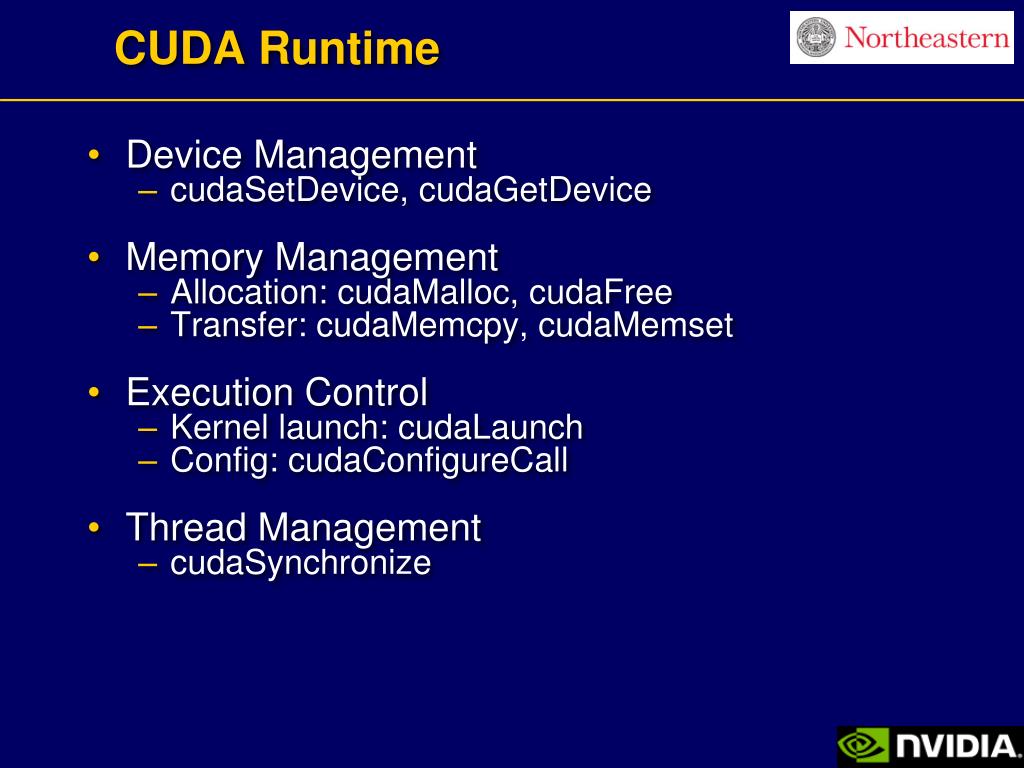

For each kernel, one or more of these resource limitations can become the limiting factor for the number of threads that simultaneously reside in a CUDA device. Once a block is assigned to an SM, it is further partitioned into warps. All threads in a warp have identical execution timing. At any time, the SM executes instructions of only a small subset of its resident warps. This allows the other warps to wait for long-latency operations without slowing down the overall execution throughput of the massive number of execution units.

Park, in Advances in GPU Research and Practice, 2017

4.4 Consistency Management

In OpenCL, multiple kernel-execution and memory commands can be executed simultaneously, and each of them may access a copy of the same memory object (e.g., a buffer). If they update the same set of locations in the memory object, we may choose any copy as the last update for the memory object according to the OpenCL memory consistency model. However, when they update different locations in the same memory object, the case is similar to the false sharing problem that occurs in a traditional, page-level software shared virtual memory system.
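The false-sharing case in the consistency-management passage can be illustrated with a small host-side sketch. It assumes a naive policy in which each command's whole copy overwrites the original when the command finishes. That policy, the `std::vector<int>` buffer model, and the names `write_back_whole` and `false_sharing_demo` are assumptions made for illustration, not behavior taken from the source.

```cpp
#include <vector>

// Naive write-back (assumed policy): the whole copy replaces the original.
void write_back_whole(std::vector<int>& original, const std::vector<int>& copy) {
    original = copy;
}

// Two commands work on separate copies of one buffer and update
// different locations in it.
std::vector<int> false_sharing_demo() {
    std::vector<int> original = {0, 0, 0, 0};
    std::vector<int> copy_a = original;  // copy accessed by command A
    std::vector<int> copy_b = original;  // copy accessed by command B
    copy_a[0] = 7;                       // A updates element 0
    copy_b[3] = 9;                       // B updates element 3
    write_back_whole(original, copy_a);
    write_back_whole(original, copy_b);  // overwrites A's update to element 0
    return original;                     // {0, 0, 0, 9}: A's write is lost
}
```

Neither copy alone holds the correct final contents, which is why picking any single copy as the last update is only acceptable when the commands wrote the same set of locations.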

Threads are assigned to SMs for execution on a block-by-block basis. Each CUDA device imposes a potentially different limitation on the amount of resources available in each SM. For example, each CUDA device has a limit on the number of thread blocks and the number of threads each of its SMs can accommodate, whichever becomes a limitation first.
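The "whichever becomes a limitation first" rule can be written as a small calculation. The sketch below models only the two limits named in the text (blocks per SM and threads per SM). The `SmLimits` struct and the function name are hypothetical, and actual limits vary by device and are not given in this passage.

```cpp
#include <algorithm>

// Hypothetical per-SM limits; real values depend on the device.
struct SmLimits {
    int max_blocks_per_sm;
    int max_threads_per_sm;
};

// Blocks are assigned whole, so the number of resident blocks is bounded by
// the block limit and by how many full blocks fit in the thread limit.
int resident_blocks_per_sm(const SmLimits& limits, int threads_per_block) {
    if (threads_per_block <= 0) {
        return 0;
    }
    const int by_threads = limits.max_threads_per_sm / threads_per_block;
    return std::min(limits.max_blocks_per_sm, by_threads);
}
```

With illustrative limits of 8 blocks and 1536 threads per SM, blocks of 256 threads are capped at 6 by the thread limit, while blocks of 64 threads are capped at 8 by the block limit and leave most thread slots unused.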

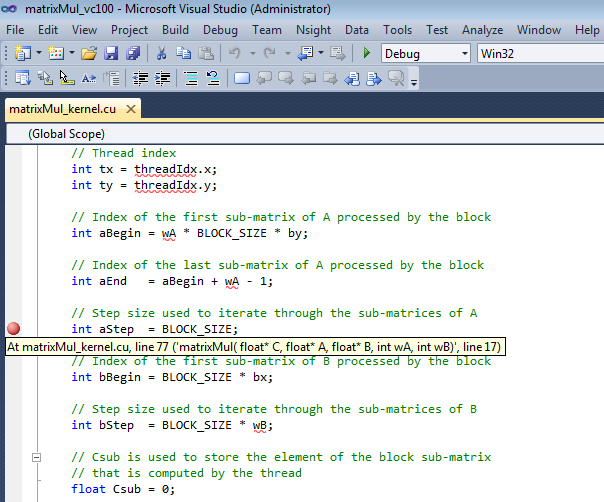

Hwu, in Programming Massively Parallel Processors (Second Edition), 2013

4.8 Summary

The kernel execution configuration defines the dimensions of a grid and its blocks. Unique coordinates in blockIdx and threadIdx variables allow threads of a grid to identify themselves and their domains of data. It is the programmer’s responsibility to use these variables in kernel functions so that the threads can properly identify the portion of the data to process. This model of programming compels the programmer to organize threads and their data into hierarchical and multidimensional organizations. Once a grid is launched, its blocks are assigned to SMs in arbitrary order, resulting in transparent scalability of CUDA applications. The transparent scalability comes with a limitation: threads in different blocks cannot synchronize with each other. To allow a kernel to maintain transparent scalability, the simple way for threads in different blocks to synchronize with each other is to terminate the kernel and start a new kernel for the activities after the synchronization point.
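How blockIdx and threadIdx coordinates map threads to data can be sketched with a host-side emulation of a one-dimensional grid. This is plain C++, not device code. The helper names are hypothetical, and the doubling operation stands in for an arbitrary per-element kernel body. The index expression mirrors the usual one-dimensional CUDA form `blockIdx.x * blockDim.x + threadIdx.x`.

```cpp
#include <vector>

// Global element index for a thread in a 1D grid.
int global_index(int block_idx, int block_dim, int thread_idx) {
    return block_idx * block_dim + thread_idx;
}

// Number of blocks needed to cover n elements (ceiling division).
int blocks_needed(int n, int block_dim) {
    return (n + block_dim - 1) / block_dim;
}

// Emulates a grid where each "thread" doubles one element. Blocks are
// visited in reverse order on purpose: the result must not depend on the
// order in which blocks run.
std::vector<int> emulate_scale_kernel(const std::vector<int>& in, int block_dim) {
    std::vector<int> out(in.size(), 0);
    if (block_dim <= 0) {
        return out;
    }
    const int n = static_cast<int>(in.size());
    const int grid_dim = blocks_needed(n, block_dim);
    for (int b = grid_dim - 1; b >= 0; --b) {
        for (int t = 0; t < block_dim; ++t) {
            const int i = global_index(b, block_dim, t);
            if (i < n) {  // guard: the last block may be only partly used
                out[i] = 2 * in[i];
            }
        }
    }
    return out;
}
```

Because each thread's work depends only on its own coordinates, the blocks can run in any order, which is the property that makes transparent scalability possible. Work that needs results from every block would go into a second pass, mirroring the kernel-termination approach to cross-block synchronization.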